Choosing the right gaming mouse is harder than it should be

This project explores how user preference, physical constraints, and performance metrics can be combined to design a human-centered recommendation system for gaming mice. Rather than optimizing for raw performance alone, the goal was to understand how comfort, hand dimensions, and subjective preference influence user satisfaction — and to translate those insights into a system capable of recommending gaming mice to new users.

Users face a genuinely difficult decision:

- Dozens of mice with nearly identical specifications

- Limited ability to test devices before purchasing

- Mismatch between hand size, grip style, and mouse shape

- Reviews that emphasize specs rather than lived experience

From a design perspective, the challenge was to capture meaningful user signals beyond raw performance, respect subjective comfort and preference, and build a recommendation system grounded in human experience — not just optimization.

How can comfort, physical fit, and subjective preference be combined into a recommendation system that helps users find the right gaming mouse — before they buy it?

Who this is for, and what I was responsible for

Primary Users

- PC gamers with varying hand sizes and grip styles

- Users seeking comfort and long-term usability rather than peak performance alone

Stakeholders

- Researchers evaluating human-centered AI systems

- Designers interested in ergonomic and interaction-driven recommendations

I was responsible for the project end-to-end:

- Designing the research questions and experimental protocol

- Submitting and managing the IRB approval process

- Recruiting participants and conducting interviews

- Designing the data collection instruments — surveys, rankings, and performance tasks

- Analyzing results and designing the recommendation logic

- Interpreting findings through a UX and HCI lens

Capturing physical and behavioral data across 14 gaming mice

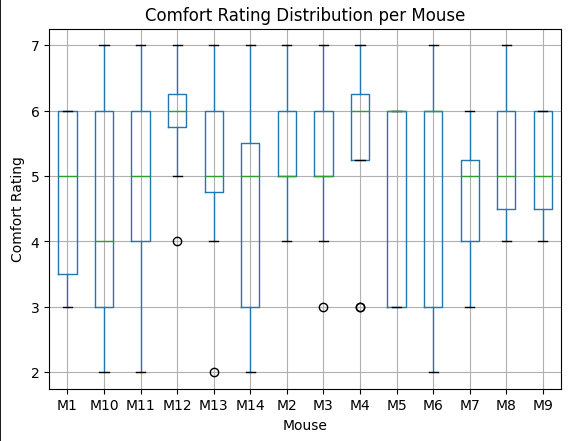

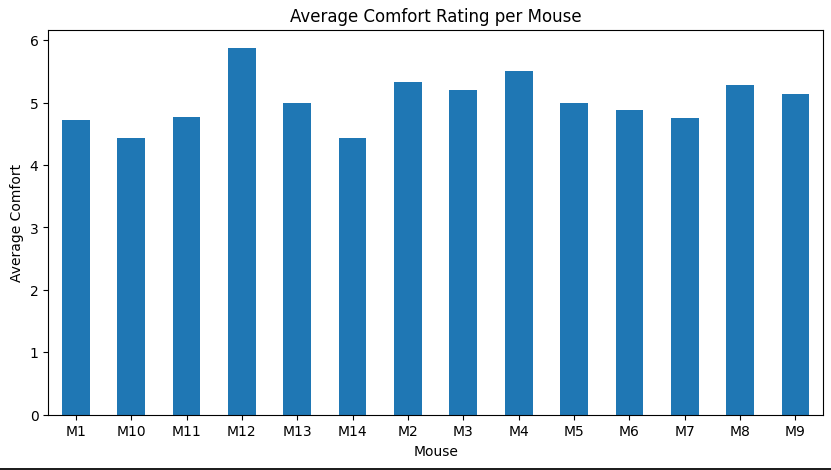

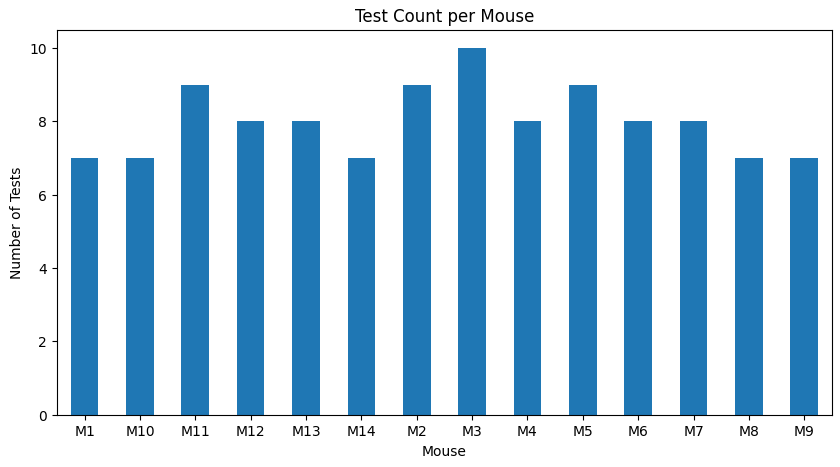

The study combined four sources of data: in-person interviews with participants; controlled experimental testing of 14 gaming mice; surveys measuring comfort, willingness to use, and subjective preference; and performance data collected through standardized Aim Lab tasks.

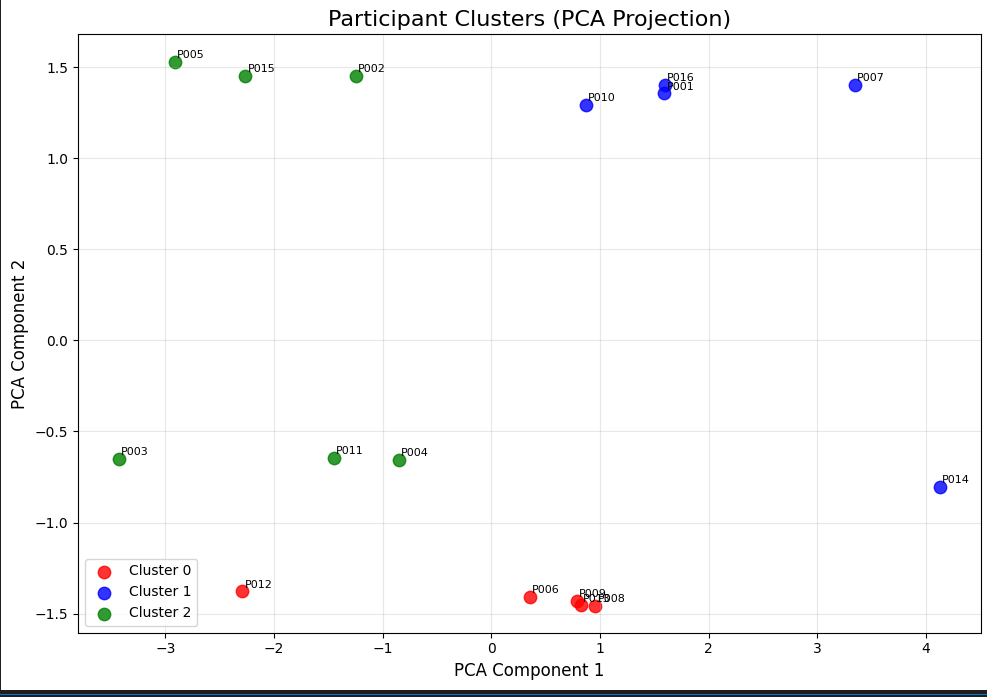

Each of the 16 participants tested 7 of the 14 mice. The mice were clustered by size and weight, then assigned to participants so that coverage was spread across the experiment.

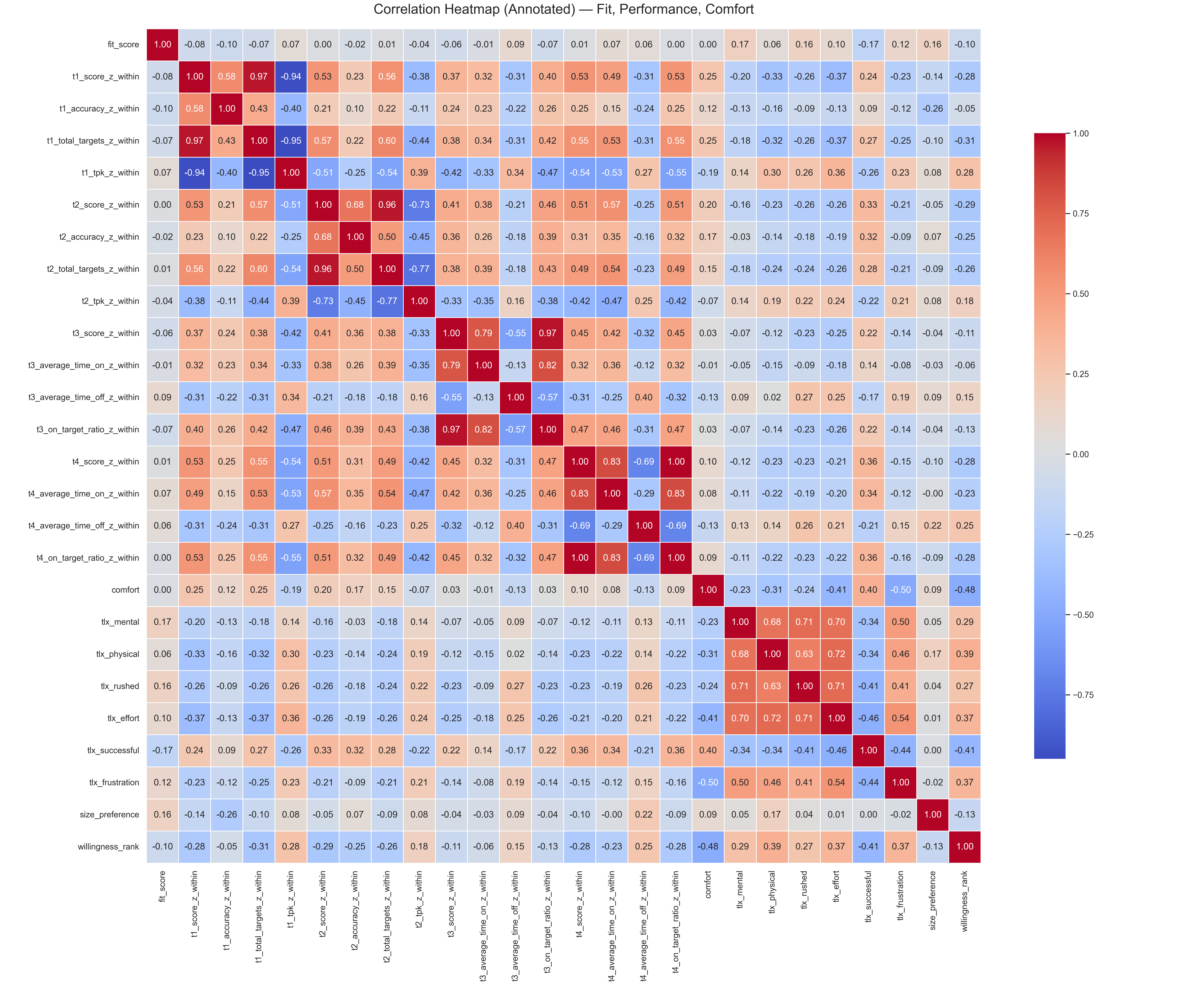

Three key insights shaped the system design:

- Comfort and preference did not always correlate with performance. Users often preferred mice that felt better even when performance metrics were similar.

- Hand dimensions and grip style shaped user experience. Users with similar hand measurements tended to favor similar shapes.

- Users could articulate discomfort more reliably than performance differences. Qualitative feedback was critical for understanding why a mouse was rejected.

These insights reinforced the importance of designing a system that treats users as experiencing bodies, not abstract data points.

An incomplete block design grounded in three clear goals

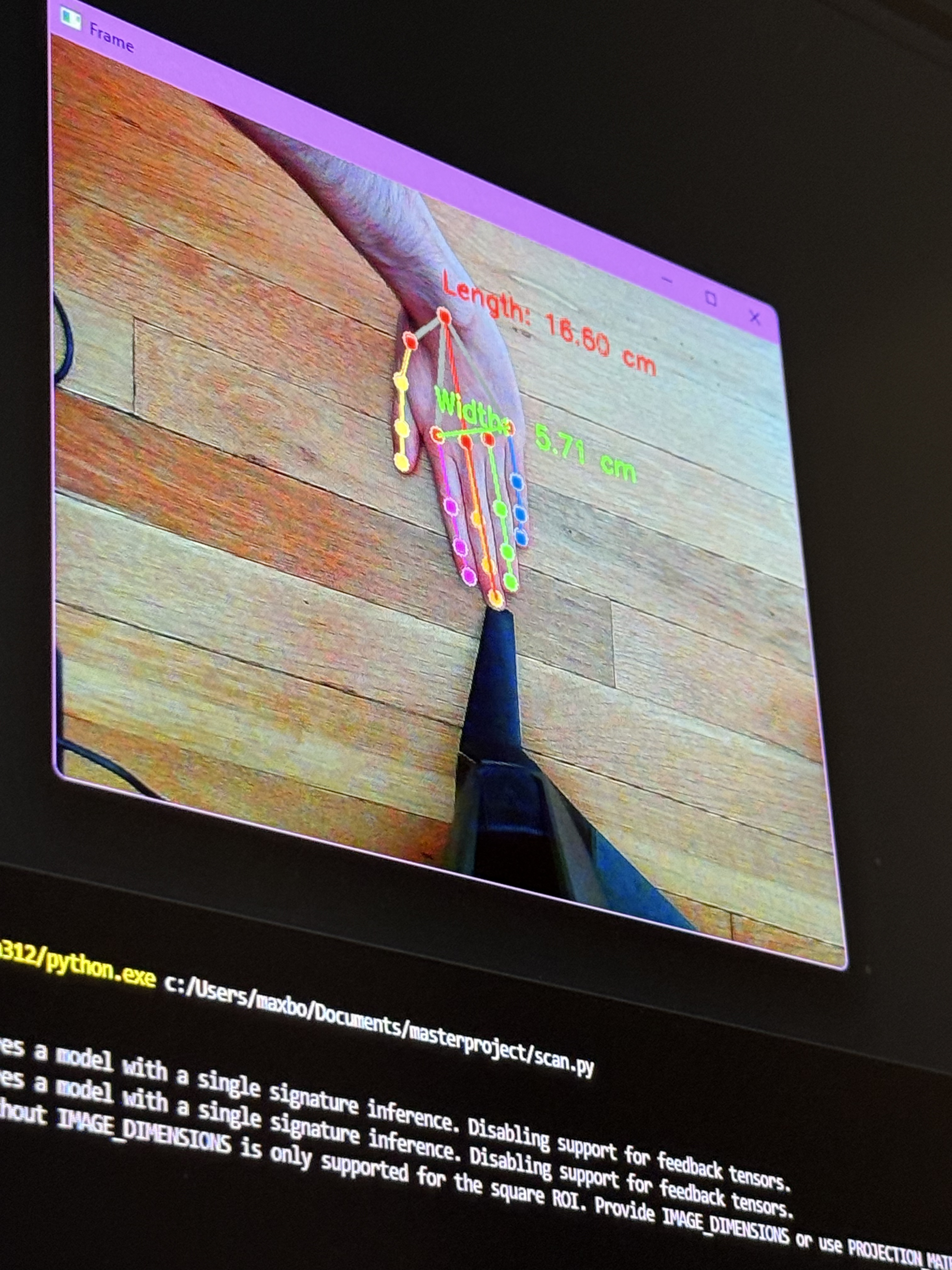

The experiment used an incomplete block structure in which each participant tested 7 of the 14 mice. Each participant had their hand scanned, the testing order was controlled to reduce fatigue and bias, and participants completed the same structured tasks and surveys for each mouse tested. This shared protocol kept experiences comparable across participants and mice, so recommendations were grounded in like-for-like data.
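One way to picture the incomplete block structure is as an assignment problem: 16 participants times 7 mice gives 112 test sessions, which divides evenly into 8 sessions for each of the 14 mice. The sketch below is only an illustration of that idea, not the study's actual procedure. The greedy least-tested rule, the function name `assign_mice`, the seeded shuffle, and the randomized presentation order are all assumptions, and the clustering by size and weight described earlier is left out for brevity.

```python
import random
from collections import Counter


def assign_mice(n_participants=16, n_mice=14, per_participant=7, seed=0):
    """Give each participant a block of mice while keeping coverage even.

    Hypothetical helper: greedily picks the least-tested mice so far,
    breaking ties at random, then shuffles the presentation order.
    """
    rng = random.Random(seed)
    counts = Counter({mouse: 0 for mouse in range(n_mice)})
    plan = []
    for _ in range(n_participants):
        candidates = list(range(n_mice))
        rng.shuffle(candidates)                      # random tie-break
        candidates.sort(key=lambda m: counts[m])     # stable: least-tested first
        block = candidates[:per_participant]
        rng.shuffle(block)                           # assumed order randomization
        for mouse in block:
            counts[mouse] += 1
        plan.append(block)
    return plan, counts


plan, counts = assign_mice()
for participant, block in enumerate(plan[:3], start=1):
    print(f"participant {participant}: mice {block}")
print("sessions per mouse:", sorted(counts.values()))
```

With these defaults every mouse ends up in the same number of sessions. A real protocol would likely add constraints this sketch ignores, such as making sure each participant sees mice from every size and weight cluster.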

Three design goals guided every decision:

- Center subjective experience. Comfort and preference should meaningfully influence recommendations.

- Respect physical constraints. Hand size and grip style should guide similarity between users.

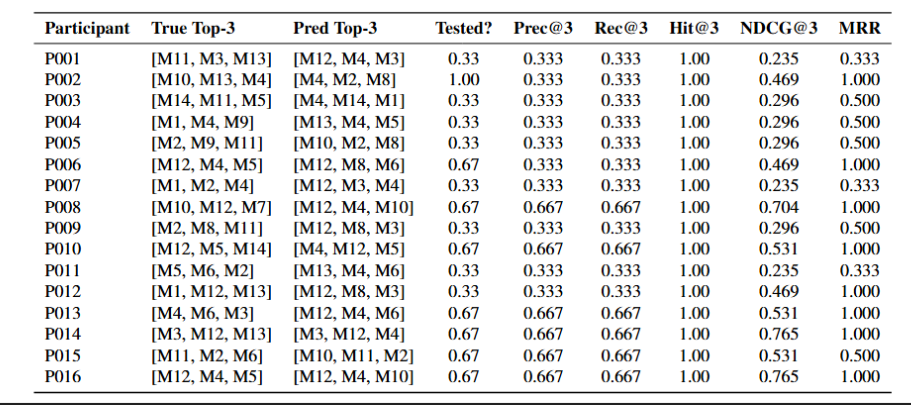

- Evaluate against real user choices. Recommendations should be validated against participant rankings.
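The third goal can be made concrete with a simple hit-rate check: how often does a participant's top-ranked mouse appear among the system's first few recommendations, compared with what random guessing would achieve? The sketch below assumes a top-k hit rate as the metric. The study's exact validation measure is not stated here, so the function names, the choice of k, and the toy data are all hypothetical.

```python
def top_k_hit_rate(recommendations, top_choices, k=3):
    """Fraction of participants whose top-ranked mouse is in the first k recommendations."""
    hits = sum(
        1
        for participant, ranked in recommendations.items()
        if top_choices[participant] in ranked[:k]
    )
    return hits / len(recommendations)


def chance_hit_rate(k, n_candidates):
    """Expected hit rate if k of n_candidates mice were recommended at random."""
    return k / n_candidates


# Hypothetical toy data: ranked recommendations and each participant's actual top pick.
recommendations = {
    "p1": ["mouse_a", "mouse_b", "mouse_c", "mouse_d"],
    "p2": ["mouse_c", "mouse_a", "mouse_b", "mouse_d"],
    "p3": ["mouse_d", "mouse_c", "mouse_b", "mouse_a"],
}
top_choices = {"p1": "mouse_b", "p2": "mouse_d", "p3": "mouse_d"}

observed = top_k_hit_rate(recommendations, top_choices, k=2)
baseline = chance_hit_rate(k=2, n_candidates=4)
print(f"observed hit rate: {observed:.2f}, chance baseline: {baseline:.2f}")
```

In a study where each participant ranked only the 7 mice they tested, the chance baseline for a top-k recommendation drawn from those mice would be k/7.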

A simple, interpretable recommendation pipeline

The system represents users using normalized hand measurements and grip style, identifies similar users based on proximity in feature space, aggregates preference signals from similar users, and produces a ranked list of recommended mice. The design prioritized simplicity and interpretability over model complexity.

The final system takes a new user's hand measurements and grip style, finds similar users based on those characteristics, weighs subjective preference and comfort to generate recommendations, and outputs a ranked list of gaming mice. Rather than claiming to find a "perfect" mouse, the system supports better-informed decision making by narrowing choices based on human-centered signals.
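The pipeline described above can be sketched in a few lines as a nearest-neighbor recommender. This is a minimal illustration under stated assumptions, not the project's implementation: the two hand measurements (length and width in millimeters), the grip labels, the min-max scaling, the fixed grip-mismatch penalty, the equal comfort and preference weighting, and all of the toy data are hypothetical.

```python
import math
from collections import defaultdict

# Hypothetical participants: hand (length_mm, width_mm), grip style,
# and per-mouse (comfort, preference) ratings on a 1-5 scale.
participants = {
    "p1": {"hand": (190.0, 100.0), "grip": "palm",
           "ratings": {"mouse_a": (5, 5), "mouse_b": (2, 2)}},
    "p2": {"hand": (188.0, 98.0), "grip": "palm",
           "ratings": {"mouse_a": (4, 4), "mouse_c": (3, 3)}},
    "p3": {"hand": (165.0, 80.0), "grip": "claw",
           "ratings": {"mouse_b": (5, 5), "mouse_c": (4, 4)}},
    "p4": {"hand": (168.0, 82.0), "grip": "fingertip",
           "ratings": {"mouse_b": (4, 5), "mouse_c": (5, 4)}},
}


def min_max_scaler(rows):
    """Return a function that rescales each measurement to the 0-1 range."""
    columns = list(zip(*rows))
    lows = [min(col) for col in columns]
    spans = [(max(col) - min(col)) or 1.0 for col in columns]
    return lambda row: tuple((v - lo) / s for v, lo, s in zip(row, lows, spans))


def distance(hand_a, grip_a, hand_b, grip_b, grip_penalty=0.5):
    """Euclidean distance on scaled measurements, plus a penalty if grips differ."""
    return math.dist(hand_a, hand_b) + (grip_penalty if grip_a != grip_b else 0.0)


def recommend(new_hand, new_grip, participants, k=2, comfort_weight=0.5):
    """Rank mice by the average weighted rating from the k most similar participants."""
    scale = min_max_scaler([p["hand"] for p in participants.values()])
    target = scale(new_hand)
    neighbors = sorted(
        participants.values(),
        key=lambda p: distance(target, new_grip, scale(p["hand"]), p["grip"]),
    )[:k]
    ratings = defaultdict(list)
    for person in neighbors:
        for mouse, (comfort, preference) in person["ratings"].items():
            score = comfort_weight * comfort + (1 - comfort_weight) * preference
            ratings[mouse].append(score)
    averages = {mouse: sum(vals) / len(vals) for mouse, vals in ratings.items()}
    return sorted(averages, key=averages.get, reverse=True)


print(recommend((189.0, 99.0), "palm", participants, k=2))
```

Because every step is a plain distance or an average, it is easy to explain why a given mouse was ranked first: the recommendation can be traced back to specific similar participants and their ratings, which fits the stated preference for interpretability over model complexity.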

A replicable, human-centered framework for ergonomic recommendations

- Completed an IRB-approved human-subjects study with 16 participants across 14 devices

- Demonstrated that user similarity based on physical and grip features can meaningfully inform recommendations

- Produced recommendations that matched participants' top choices at a rate above chance

- Provided a replicable framework for ergonomic recommendation systems applicable beyond gaming peripherals

What I learned

Overall, the project succeeded as a proof of concept: a pipeline in which users have their hand scanned, test an array of mice, and report how each one felt, so that the resulting data can meaningfully inform mouse selection.

The study did face real limitations. The samples of both participants and mice were small relative to the broader population of gamers and devices, and there are many variables that 16 participants and 14 mice can't fully account for. Testing was also limited to aiming tasks, which capture one dimension of use but miss much of how people actually interact with a mouse day-to-day.

If this project were to continue, the next steps would be to expand the selection of mice and the number of participants, and to let participants use the mice for longer periods across multiple environments, not just in controlled aiming tasks.